Higgsfield AI Review: I Tried It (Features, Pricing, Pros & Cons)

Every serious creator eventually hits the same wall with AI video. You write the perfect prompt, press generate, and wait — not with confidence, but with hope. Hope that the character looks the same as last time. Hope that the camera does something intentional. Hope that the physics don't melt your subject's face into something unrecognizable. You are not directing. You are gambling.

Higgsfield AI was built as a direct answer to that frustration.

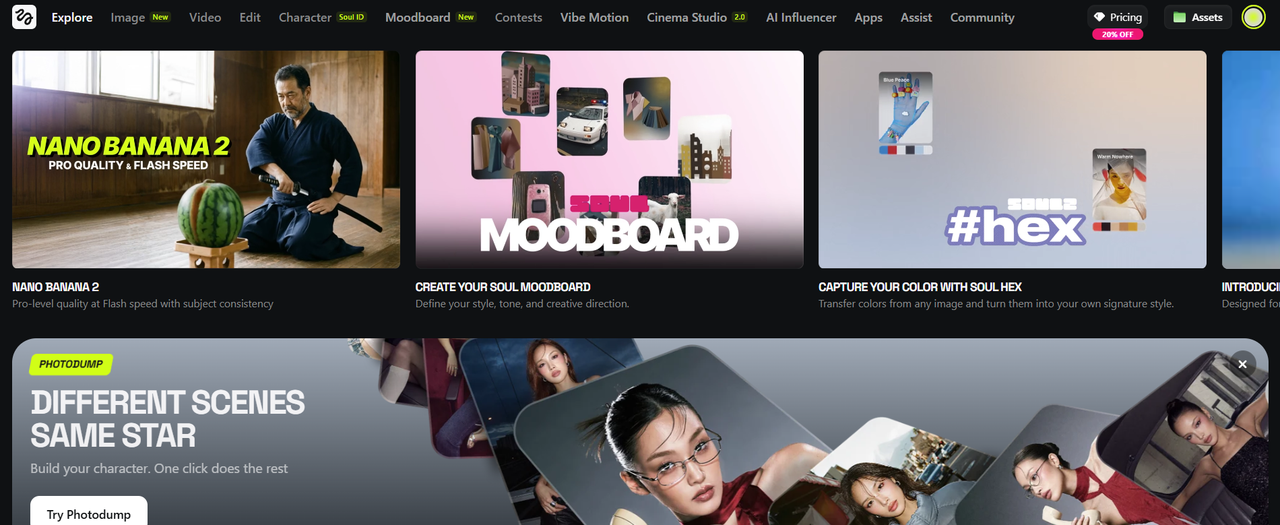

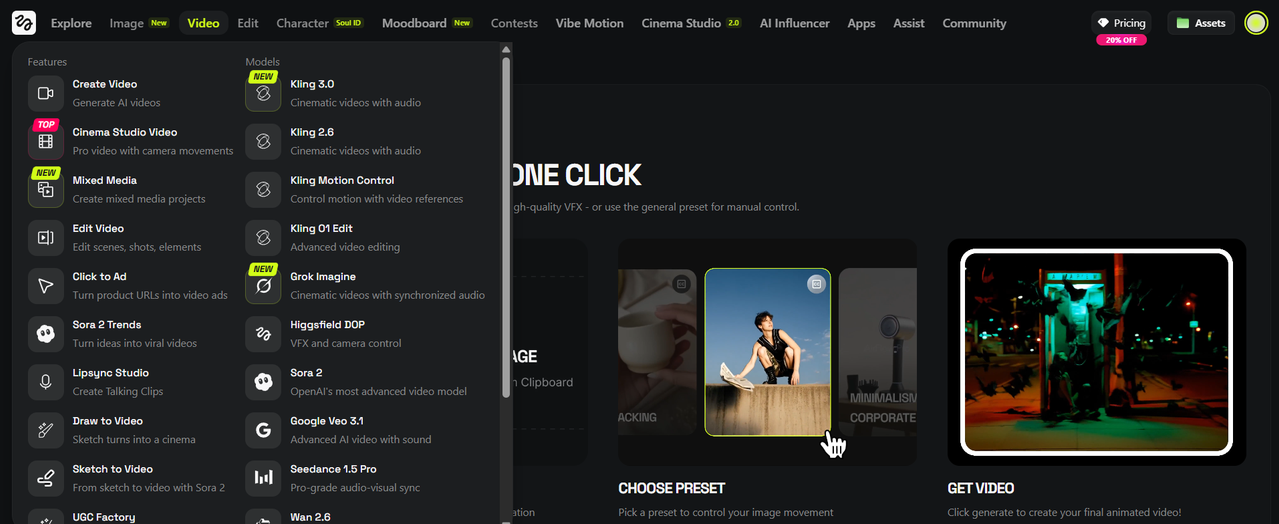

Founded by Alex Mashrabov, former Director of Generative AI at Snap Inc., Higgsfield is not another text-to-video slot machine. It is a full-stack AI production platform that integrates Sora 2, Kling 3.0, and Google Veo 3.1 under one interface, wraps them in professional cinematography tools that no competitor currently matches, and adds a character consistency system — Soul ID — that keeps the same face across every single clip you generate. Cinema Studio 2.0 simulates real lenses and real lighting physics. The Moodboard locks your visual style across an entire session. Over 85 one-click Apps handle everything from UGC ads to AI influencer content without manual configuration.

It is not the easiest AI video platform, but it is the most capable one. This review covers every feature, every pricing tier, the real limitations, and whether it is worth your money.

WRITER’S NOTE: This is one of the most in-depth and comprehensive reviews of Higgsfield on the Internet, and I took my time to cover everything. By the time you're done reading, you will know exactly how Higgsfield works, what its features, pros, and cons are, and how to use it better than 99% of users. Hope this helps you out. Let's jump straight in.

P.S. Use the links in this article to claim 20% off Higgsfield AI.

👉 Click Here To Get 20% Off Higgsfield

What is Higgsfield AI?

Higgsfield AI is a comprehensive AI media production platform that operates simultaneously as:

A developer of proprietary AI models (Soul, Soul 2.0, Nano Banana, Nano Banana 2/Pro)

An aggregator and interface layer for third-party models (Sora 2, Kling 2.6/3.0, Google Veo 3.1, Wan 2.6, Seedance, MiniMax Hailuo 02)

A production toolkit with specialized apps, effects, and workflows built on top of those models

Think of it this way: if OpenAI's Sora is a powerful engine, Higgsfield is the vehicle chassis, the steering wheel, the GPS, and the custom paint job built around it. The engine matters, but the platform determines the driving experience.

What makes Higgsfield distinctive is its deliberate targeting of two audiences that other platforms treat as mutually exclusive: professional filmmakers and storytellers who need deterministic control over their creative output, and social media power users who need to produce high-volume, platform-ready content quickly. Most AI tools serve one or the other. Higgsfield serves both — through the same interface.

Its core product, Cinema Studio 2.0, is the professional centerpiece. Its Apps library (with dozens of one-click viral content generators) serves the content creator market. Its Soul ID system bridges the gap — giving anyone, from an agency creative director to an independent TikTok creator, the ability to build a consistent AI character and deploy them across every format.


How Does Higgsfield Actually Work?

Understanding Higgsfield's architecture helps explain why its outputs often feel more controlled than those from comparable tools. The platform separates three things that most competitors bundle together:

1. The Input Layer

You can begin with any of the following:

A text prompt (describe the scene, character, mood, action)

A reference image (your "Hero Frame" — the visual anchor for the generation)

A video clip (for extension, transformation, or style transfer)

A Soul ID character (a saved character profile that locks identity across generations)

A Moodboard (a collection of reference images defining style, color palette, and tone)

2. The Logic and Routing Layer

This is where Higgsfield diverges from simple prompt-to-video tools. Before sending your request to any model, the platform applies several layers:

Seedream Engine (Logical Consistency): Higgsfield's Seedream model family is specifically trained for "intelligent visual reasoning" — meaning it analyzes your prompt for physical plausibility. If you describe a glass shattering, the system understands that shards scatter outward; if you describe a fabric blowing in wind, it knows how different materials (silk vs. denim) move differently. This prevents the "AI warping" artifacts that plague lower-tier generators.

Model Routing: Based on your selected parameters (speed vs. quality, image vs. video, cinematic vs. social), the system routes your request to the most appropriate engine. You can also manually select: Nano Banana 2 for ultra-fast 4K image generation, Kling 2.6 or Kling 3.0 for high-fidelity cinematic video with audio, Sora 2 for long-form coherent storytelling, or Google Veo 3.1 for photorealistic rendering with advanced camera physics.

Soul ID Injection: If you've defined a character, the Soul ID system injects the character's visual signature (facial geometry, distinguishing features, style parameters) into the generation request before it hits the model, ensuring consistency across outputs.
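The routing and injection steps above can be sketched in a few lines of Python. This is only an illustration of the idea, not Higgsfield's actual API: the model names come from this review, but the routing table, the `GenerationRequest` class, and the payload fields are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical routing table. The model names appear in the review,
# but these mapping rules are invented for illustration only.
ROUTES = {
    ("image", "speed"): "Nano Banana 2",
    ("video", "cinematic"): "Kling 3.0",
    ("video", "long_form"): "Sora 2",
    ("video", "photoreal"): "Google Veo 3.1",
}


@dataclass
class GenerationRequest:
    prompt: str
    media: str                     # "image" or "video"
    priority: str                  # e.g. "speed", "cinematic", "long_form"
    model: Optional[str] = None    # manual model choice, if any
    soul_id: Optional[str] = None  # saved character identity, if any


def route(request: GenerationRequest) -> str:
    """Pick a model: a manual choice wins, otherwise use the table."""
    if request.model is not None:
        return request.model
    key = (request.media, request.priority)
    if key not in ROUTES:
        raise ValueError(f"no route for {request.media}/{request.priority}")
    return ROUTES[key]


def build_payload(request: GenerationRequest) -> dict:
    """Assemble the request that would be sent to the chosen model."""
    payload = {"model": route(request), "prompt": request.prompt}
    # "Injection" here simply means attaching the character identity
    # before the request reaches the model.
    if request.soul_id is not None:
        payload["character"] = request.soul_id
    return payload


request = GenerationRequest(
    prompt="a rooftop chase at dusk",
    media="video",
    priority="long_form",
    soul_id="hero-01",
)
print(build_payload(request))
```

The point of the sketch is the ordering: the model is chosen first, and the character identity is attached to the request before it is sent, so the same character can travel with any model.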

3. The Refinement Layer

After generation, Higgsfield applies:

Proprietary upscalers to bring outputs to 4K resolution with enhanced detail

Sora 2 Enhancer — a dedicated stabilization and resolution-boosting tool specifically for Sora-generated clips, which tend to have consistency issues at frame level

Skin Enhancer — a Pro-tier app that adds natural, realistic skin textures to AI-generated characters, eliminating the "plastic" look common in AI portraiture


Who Owns and Runs Higgsfield?

Higgsfield AI was founded by Alex Mashrabov, formerly the Director of Generative AI at Snap Inc. (Snapchat). This background is not incidental — it is the philosophical DNA of the entire platform.

At Snap, Mashrabov worked on AR Lenses and consumer-facing AI experiences that needed to be not only technically impressive but culturally resonant. An AI effect that goes viral doesn't just have to look good; it has to feel right for a specific platform, a specific demographic, a specific moment. That sensibility runs through every corner of Higgsfield.

This explains why the platform talks about "cultural fluency" in its model documentation — the Soul 2.0 model, for instance, is explicitly trained to understand fashion aesthetics, urban visual culture, and the kind of imagery that performs well on Instagram Reels and TikTok. This is not an afterthought; it's a design principle inherited from years of building for Snapchat's 300 million daily users.

The company is headquartered at 535 Mission St, 14th floor, San Francisco, CA 94105 — in the heart of the Bay Area AI cluster — and has been actively hiring across machine learning, product, and creative roles.


Higgsfield Features

Here is every major feature category, explained in full.

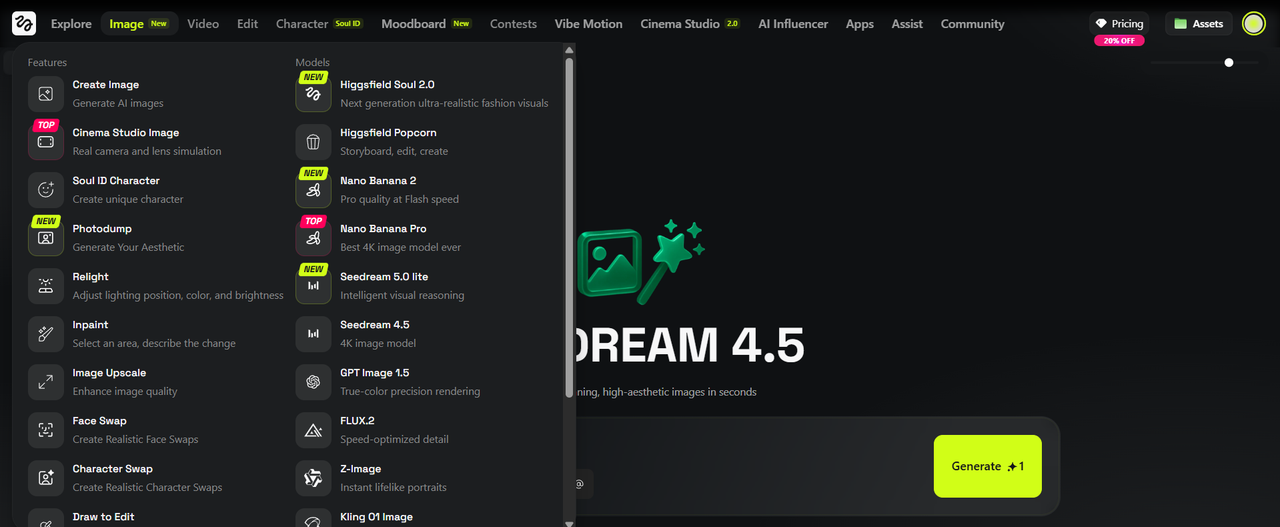

1. Image Generation

Image generation is one of Higgsfield's strongest areas, and the platform offers more model variety here than almost any competitor. Rather than routing everything through a single engine, Higgsfield lets you choose the right model for the specific type of image you're trying to create.

Soul 2.0 is Higgsfield's flagship proprietary photo model and the most distinctive offering in the image section. It was built specifically for fashion, portraiture, and editorial aesthetics — not photorealism in the generic sense, but the kind of intentional, art-directed visual quality you'd associate with a high-end magazine shoot. Soul 2.0 carries what Higgsfield calls "built-in cultural fluency," meaning it understands the visual languages of specific aesthetic communities — streetwear, K-fashion, haute couture, urban editorial — without requiring elaborate prompt engineering to get there. It handles skin tones across a wide range with unusual accuracy, renders fabric behavior realistically (the difference between how silk drapes versus how leather falls), and produces lighting that reads as chosen rather than accidental.

Nano Banana 2 is the newest addition and is positioned as the fastest high-quality image model on the platform. Described as delivering "pro-level quality at Flash speed with subject consistency," it is optimized for creators who need sharp, 4K-quality outputs quickly without sacrificing detail or coherence. Its predecessor, Nano Banana Pro, remains available and is consistently ranked among the top creator-recommended tools on the platform.

Seedream 5.0 Lite brings a different kind of intelligence to image generation. Built around what Higgsfield calls "intelligent visual reasoning," Seedream is specifically trained to understand logical and physical consistency in a scene. If you describe a table with objects on it, the objects sit correctly. If you describe a liquid, it behaves like a liquid. Seedream 5.0 Lite is currently available with unlimited generation access on the platform.

Seedream 4.5 is the previous generation of the same model family — still a strong 4K image option, particularly for scenes requiring accurate spatial logic.

GPT Image 1.5 brings OpenAI's image reasoning capabilities into the Higgsfield interface, useful for conceptual, illustrative, or heavily text-integrated image work.

Flux Kontext and Flux 2 round out the image model roster, providing additional stylistic options for creators who prefer Flux-family outputs for certain aesthetics.

Wan 2.2 Image extends the platform's coverage further, particularly for stylized and illustrated visual styles.

Across all image models, the platform supports Moodboard-guided generation — where a pre-defined collection of reference images constrains the style of every output — and Soul ID injection, where a saved character identity is applied before generation to maintain facial and stylistic consistency across the entire session.


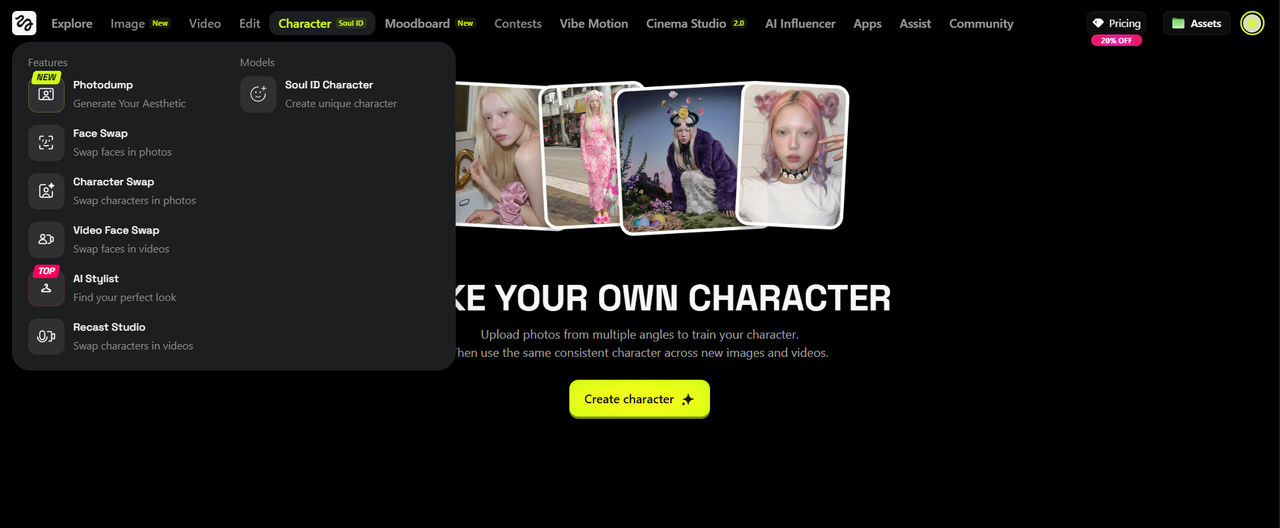

2. Soul ID and Character System

Soul ID is the feature that most experienced Higgsfield users identify as the platform's single most valuable contribution to AI creative work, and for good reason. It solves the most fundamental problem in AI video and image production: identity drift.

When you generate AI content of a character without any consistency system, every generation produces a slightly (or dramatically) different person. The same prompt, run twice, gives you two different faces. This makes it functionally impossible to build a narrative across multiple shots, maintain a consistent brand character, or develop an AI persona with a recognizable identity over time.

Soul ID addresses this by creating a persistent character token — a compressed identity signature derived from your reference images and attribute settings — that is injected into every subsequent generation involving that character. The result is that you can place the same person in a Paris café, on a Tokyo rooftop, in a sci-fi spacecraft interior, or against a plain studio backdrop, dressed in entirely different outfits in each, and their face, their proportions, and their distinguishing features remain consistent across every single output.

Creating a Soul ID is done through the Character section of the platform. You upload reference images of your subject, define key visual attributes, and the system generates the identity token. Once saved, the character can be applied to any image generation, any video generation, and any App in the platform's library that supports character injection.

The Multi-Reference feature extends this further, allowing you to combine multiple visual references in a single generation — useful when you want to blend the face of one reference with the style or body type of another, or when building a character that synthesizes elements from multiple sources.

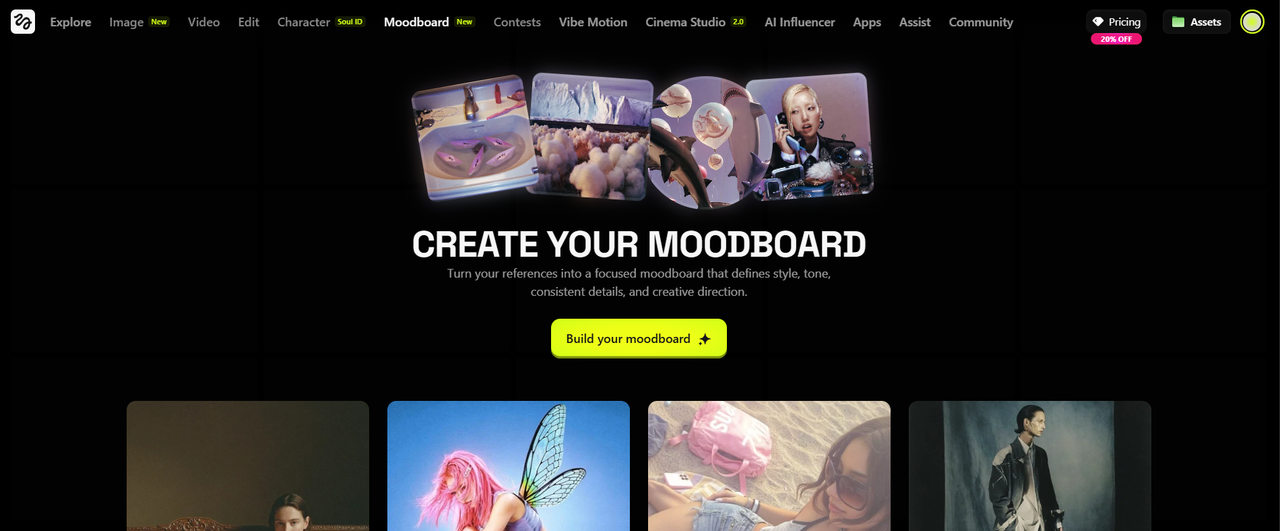

3. Moodboard and Soul Hex

The Moodboard is one of the platform's newest additions and addresses the persistent gap between what creators can describe in words and what they can show in images.

The tool lets you upload a collection of reference images — photographs, artwork, screenshots, editorial images, anything that represents the visual direction you're aiming for — and have the platform analyze them collectively to extract a coherent visual identity. This identity covers color palette, lighting quality and direction, compositional preferences, texture characteristics, tonal mood, and stylistic register.

Once a Moodboard is defined, it functions as a persistent style anchor for any generation you run. Rather than re-describing your desired aesthetic in every prompt, the Moodboard carries that information automatically, ensuring visual consistency across a batch of images or a series of videos without any additional effort per generation.

Soul Hex is a specific capability within the Moodboard ecosystem. It allows you to extract the precise color signatures from any reference image and convert them into a reusable color style. Instead of trying to describe a color palette in words ("warm amber with cool shadow tones and a slight magenta cast in the highlights"), you simply point Soul Hex at a reference image and it captures and encodes those colors directly. From that point forward, every generation using that Soul Hex carries the exact color language you defined.
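To make the idea of extracting color signatures concrete, here is a minimal Python sketch of palette extraction. It is not how Soul Hex is implemented (the review does not say); it simply buckets pixels into coarse color bins and returns the most common ones as hex codes. The function names and the `step` parameter are assumptions, and the pixel list stands in for data you would normally read from an image file.

```python
from collections import Counter


def to_hex(rgb):
    """Format an (r, g, b) tuple as a hex color string."""
    return "#{:02x}{:02x}{:02x}".format(*rgb)


def extract_palette(pixels, n_colors=3, step=32):
    """Return the n most common colors after coarse quantization.

    Each channel is snapped to the center of a `step`-wide bucket so
    that near-identical shades are counted as one color.
    """
    def quantize(channel):
        return min(255, (channel // step) * step + step // 2)

    counts = Counter(
        tuple(quantize(channel) for channel in pixel) for pixel in pixels
    )
    return [to_hex(rgb) for rgb, _ in counts.most_common(n_colors)]


# Stand-in pixel data: mostly warm amber, some deep blue, one white pixel.
pixels = [(250, 180, 60)] * 5 + [(20, 30, 90)] * 3 + [(255, 255, 255)]
print(extract_palette(pixels, n_colors=2))  # ['#f0b030', '#101050']
```

A reusable color style is then just this short list of hex codes, which can be stored once and applied to later generations instead of being described again in words.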

Together, Moodboard and Soul Hex give creators something that was previously extremely difficult to achieve in AI generation: true stylistic ownership. Your outputs start looking like yours rather than like generic AI content.


4. Video Generation

Higgsfield's video generation capabilities are built on a combination of its own orchestration layer and integrations with the industry's most capable third-party models. The platform's approach is aggregation rather than exclusivity — rather than building a single video model and locking users into it, Higgsfield provides access to multiple engines and routes generation requests to the most appropriate one based on the task.

Kling 2.6 produces cinematic-quality video with synchronized audio, making it the platform's primary tool for high-fidelity narrative content. Its strength is in photorealistic rendering with natural motion and the kind of output quality that looks genuinely cinematic rather than AI-generated.

Kling 3.0 is the newest version of the Kling model family, building on 2.6's strengths with further improvements to motion coherence and scene consistency.

Kling O1 is a reasoning-enhanced version of the Kling model, designed for more complex generation tasks that benefit from additional computational reasoning before the video is produced.

Kling 2.5 Turbo remains available for creators who need faster generation times on Kling-quality outputs.

Sora 2 from OpenAI is integrated directly into the Higgsfield workflow, accessible through the platform's interface alongside a dedicated Sora 2 Presets library and specialized tools including Sora 2 Upscale and the Sora 2 Enhancer for stabilizing and resolving the flickering and consistency issues that can appear in raw Sora output.

Google Veo 3.1 handles photorealistic physics-compliant rendering and smooth camera tracking. Its integration is particularly valuable for scenes requiring believable real-world physics — liquid, fire, fabric, complex environmental interaction — and for the camera tracking behind Higgsfield's most ambitious motion control sequences.

Wan 2.5 and 2.6 are available for stylized motion aesthetics, particularly useful for content that benefits from a more illustrative or painterly visual quality in motion.

Seedance Pro and Seedance 2.0 from ByteDance bring multi-shot storytelling capabilities, maintaining consistency across longer sequences that change scene or setting.

MiniMax Hailuo 02 rounds out the video model roster, providing another high-quality generation option with distinct stylistic characteristics.

All video models are accessible through the main Create Video interface, and most are also available through the Vibe Motion chat interface, which allows creators to describe their desired video through natural conversational prompts rather than structured parameter inputs.

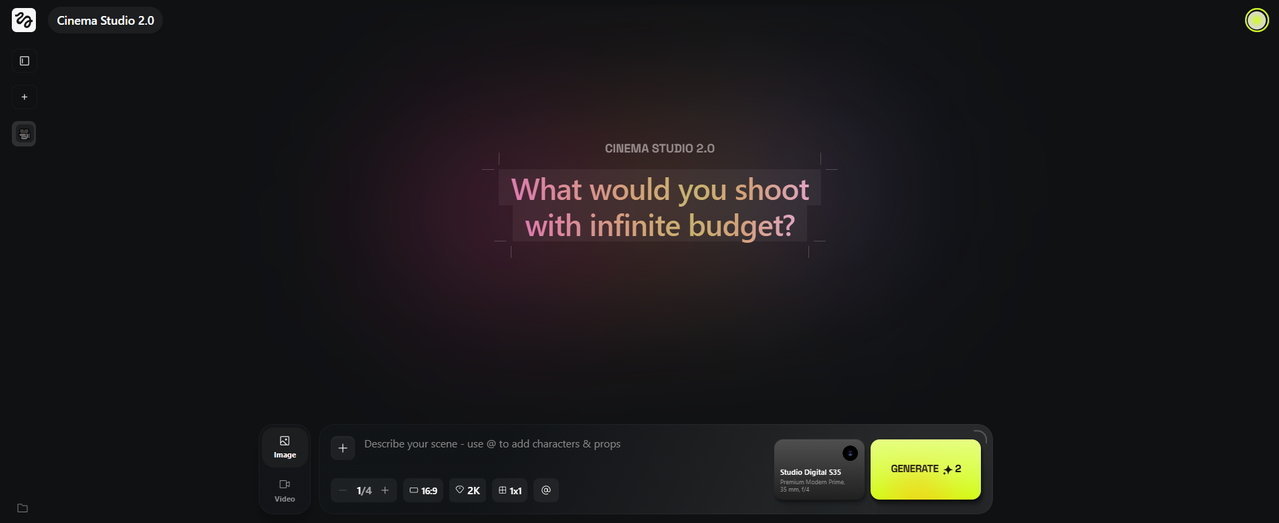

5. Cinema Studio 2.0

Cinema Studio 2.0 is the professional centerpiece of the platform and the feature that most clearly differentiates Higgsfield from every other AI video tool on the market. It is designed not just to mimic the aesthetic of professional cinema but to replicate the actual mental model of a working cinematographer.

Lens Simulation allows users to select from a range of simulated optics. The Anamorphic lens produces the wide, cinematic scope format with characteristic horizontal lens flares and slight barrel distortion — the look associated with prestige cinema. The 35mm lens delivers the versatile, naturalistic film look that reads as "cinematic" across most genres. The 85mm lens is the classic portrait focal length, producing beautiful background compression and flattering subject rendering through shallow depth of field. These are not color filters or post-processing adjustments — they change how the AI constructs the scene, including how light behaves, how focus falls, and how the edges of the frame render.
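For readers unfamiliar with focal lengths, the difference between the 35mm and 85mm options comes down to field of view. The short Python sketch below uses the standard thin-lens approximation and assumes a full-frame sensor 36mm wide; that sensor size is my assumption, since the review does not say what sensor format Cinema Studio simulates.

```python
import math


def horizontal_fov_degrees(focal_length_mm, sensor_width_mm=36.0):
    """Horizontal field of view for a lens focused at infinity.

    Uses fov = 2 * atan(sensor_width / (2 * focal_length)).
    The 36mm default assumes a full-frame sensor.
    """
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_length_mm)))


for focal_length in (35, 85):
    fov = horizontal_fov_degrees(focal_length)
    print(f"{focal_length}mm lens: about {fov:.1f} degrees horizontally")
```

Under these assumptions the 35mm lens sees roughly 54 degrees of the scene and the 85mm lens roughly 24 degrees, which is why the longer lens isolates a subject and compresses the background.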

Lighting Presets operate on the same principle. Golden Hour gives you warm, directional light from a low sun angle with rich shadow and saturated skin tones. Cyberpunk delivers high-contrast neon fill with strong color separation. Cinematic Moody produces desaturated midtones with retained shadow depth, the kind of look that defines contemporary prestige television. These presets influence the actual generation, not just the color grade of the output.

True Optical Simulation extends the realism to optical physics: lens flares that respond to light source position, chromatic aberration at the frame edges, the natural light falloff from center to corner, and the way glass optics interact with practical lights in the scene. These are effects that previously required professional compositing software to achieve.

Frame Rate and Aspect Ratio Control lets users specify 24fps for the motion blur characteristic of film, higher rates for hyper-real or sports aesthetics, and the 21:9 anamorphic aspect ratio for the full widescreen cinema format.


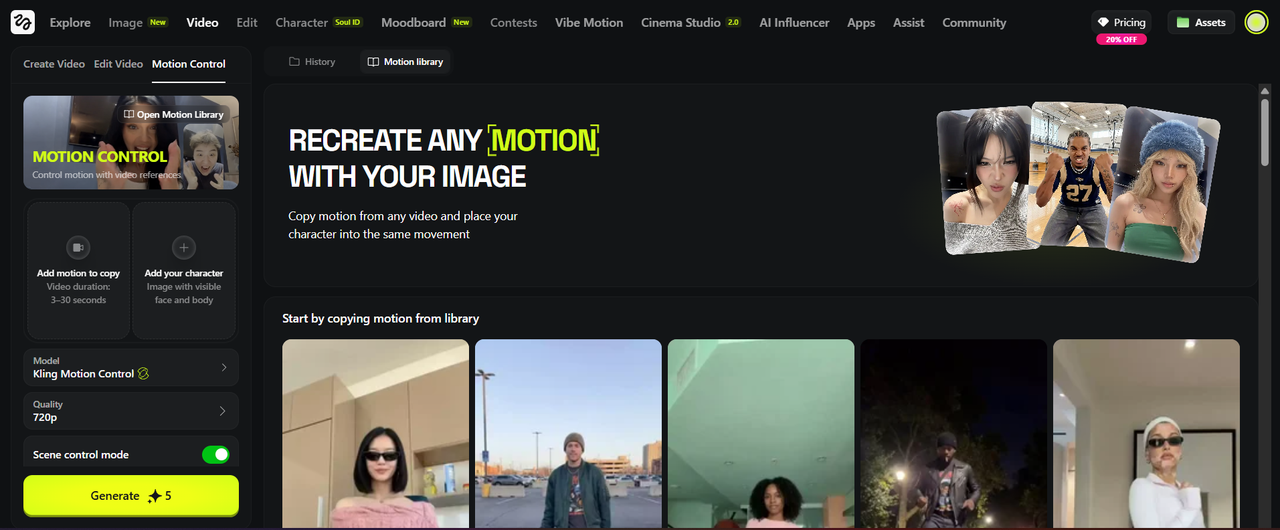

6. Motion Control

Motion control on competing platforms typically means a slider that ranges from "static" to "dynamic." Higgsfield's approach is categorically different and reflects the platform's core commitment to giving creators the tools of a real cinematographer.

The primary tool is the 3D Directional Sphere — an interactive visual interface that lets you plot your camera's actual path through three-dimensional space. You drag a path on the sphere, defining where the camera starts, which direction it travels, at what speed, and where it ends. The camera moves through the generated scene according to this path rather than having the AI interpret a vague motion instruction.

The platform's preset library contains over 70 distinct camera movements organized across categories:

Cinematic presets include the Dolly Zoom (camera moves forward while the lens zooms out, creating the disorienting depth compression made famous by Hitchcock), Aerial Pullback (rises and pulls back to reveal environment), Flying Cam Transition (fast overhead movement), and Trucksition (lateral tracking combined with a transition).

Action and dynamic presets include Fast Sprint, I Can Fly, Bullet Time (frozen-time 360-degree effect), Train Rush, and Hero Flight.

Transition presets include Raven Transition, Melt Transition, Smoke Transition, Splash Transition, Roll Transition, Seamless Transition, Jump Transition, Hole Transition, Display Transition, Hand Transition, and Flame Transition — each producing a distinct visual handoff between shots.
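The Dolly Zoom preset mentioned above is easier to understand with numbers. The effect works by keeping the subject the same size in frame while the camera distance changes, which means the field of view has to widen as the camera moves closer. The Python sketch below computes that relationship from basic geometry; it illustrates the optics only and says nothing about how Higgsfield implements the preset. The function names and the example distances are my own.

```python
import math


def dolly_zoom_fov(subject_width, distance):
    """Field of view (degrees) at which a subject of the given width
    exactly fills the frame from the given camera distance."""
    return math.degrees(2 * math.atan(subject_width / (2 * distance)))


def dolly_zoom_schedule(subject_width, start_distance, end_distance, steps):
    """List of (distance, fov) pairs for a linear camera move.

    The subject stays the same size in frame at every step, while the
    background perspective changes.
    """
    if steps < 2:
        raise ValueError("steps must be at least 2")
    schedule = []
    for i in range(steps):
        t = i / (steps - 1)
        distance = start_distance + t * (end_distance - start_distance)
        schedule.append((distance, dolly_zoom_fov(subject_width, distance)))
    return schedule


# Camera moves from 10 units away to 2 units away from a 2-unit-wide subject.
for distance, fov in dolly_zoom_schedule(2.0, 10.0, 2.0, 5):
    print(f"distance {distance:4.1f} -> field of view {fov:5.1f} degrees")
```

As the camera approaches, the required field of view grows steadily, which is the "zoom out while moving forward" behavior described in the preset.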

Kling Motion Control adds a precision tier beyond camera direction. Rather than just moving the camera, it lets you direct a character's physical actions and facial expressions at the frame level, specifying body movements, gestures, and expressions, for sequences up to 30 seconds in length. You upload a reference image and a reference video, and the movements in the reference video are automatically transferred to the subject in the reference image, which is super cool.

Google Veo 3.1 integration handles the physics of camera movement itself, ensuring that spatial relationships between objects remain consistent across a moving shot and that the camera's inertia, momentum, and deceleration feel physically believable.

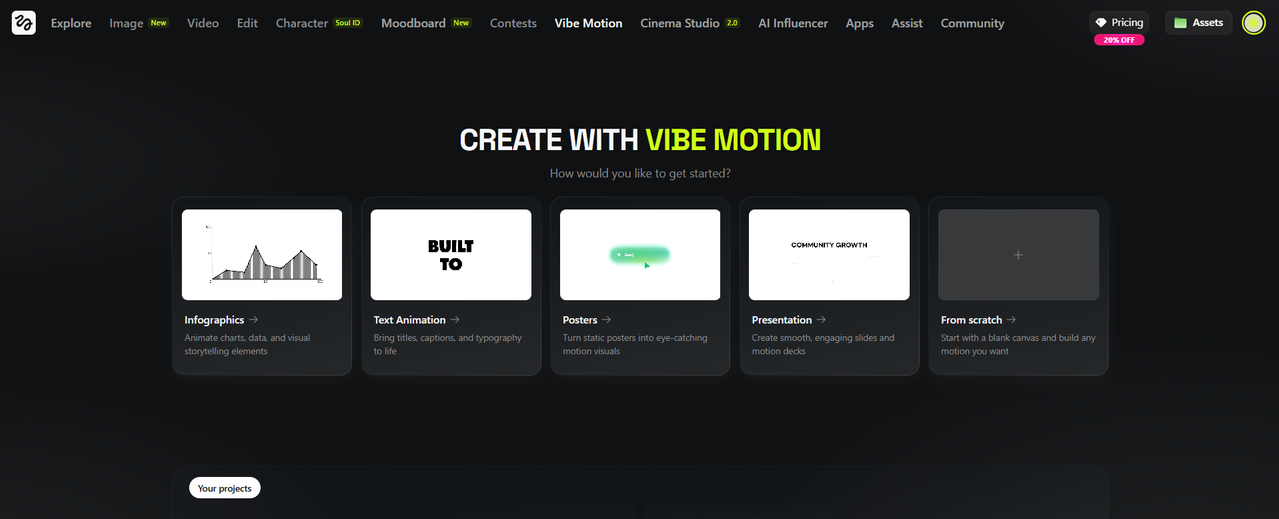

7. Higgsfield Vibe Motion

If you want to make attention-grabbing motion graphics such as text animations and infographics, Higgsfield has a dedicated section for it.

With Higgsfield Vibe Motion you can create:

Infographics: Animate charts, data and visual storytelling elements.

Text Animations: Bring titles, captions, and typography to life.

Posters: Turn static posters into eye-catching motion visuals.

Presentations: Create smooth, engaging slides and motion decks.

You can also start from scratch.

8. Visual Effects Library

The Visual Effects library is a collection of pre-built motion effects that can be applied to any image or character. Each effect is a self-contained generation workflow — select it, upload your source image, and the platform handles everything else.

The library currently contains well over 80 named effects organized across thematic categories:

Elemental effects include Air Bending, Water Bending, Earth Wave, Earth Element, Earth Zoom Out, Fire Element, Water Element, Firelava, Flame On, and Flame Transition — all simulating interaction with or transformation through natural forces.

Transformation effects include Animalization (human to animal), Cyborg, Werewolf, Monstrosity, Turning Metal (multiple variants), Gas Transformation, and Disintegration — surreal body transformations at varying degrees of intensity.

Supernatural and cosmic effects include Northern Lights, Plasma Explosion, Atomic, Clone Explosion, Building Explosion, Thunder God, Portal, Multiverse, Luminous Gaze, Saint Glow, Innerlight, and Diamond.

Horror and dark effects include Horror Face, Spiders from Mouth, Head Explosion, Head Off, Black Tears, Shadow Smoke, and Glitch.

Nature and organic effects include Sakura Petals, Nature Bloom, Garden Bloom, Ice Rose, Cotton Cloud, Aquarium, Glowing Fish, Balloon, and Wonderland.

Entertainment and social effects include Live Concert, Tattoo Animation, Duplicate, Objects Around, Shadow, Buddy, Polygon, Wireframe, Collage, 3D Rotation, Levitation, and Look BOOM.

Transition effects — as listed in the Motion Control section — are also accessible from the Visual Effects library.


9. Higgsfield Apps

The Apps section is a library of specialized, pre-configured content workflows — each one designed to produce a specific type of content with minimal setup. They are best understood as professional presets that encapsulate complex prompt engineering, model configurations, and post-processing into a single streamlined interface.

The library currently contains over 85 named Apps organized across functional categories:

Character and identity apps include Face Swap (instant photo face swap), Video Face Swap (the video equivalent), Character Swap 2.0 (full character replacement), Recast (industry-leading character swap for any existing video), Commercial Faces, and Chameleon.

Fashion and styling apps include AI Stylist, Outfit Swap, Outfit Shot, Latex, Skin Enhancer (Pro), and Angles 2.0 — the last of which generates any angle view of any image in seconds, critical for product photography and character development.

Commercial and advertising apps include Click to Ad (paste a product URL, receive a video ad), Packshot (professional product photography), Billboard Ad, Truck Ad, Kick Ad, Graffiti Ad, Volcano Ad, Fridge Ad, Giant Product, Macro Scene, and Macroshot Product.

ASMR apps include ASMR Classic, ASMR Host, ASMR Promo, and ASMR Add-On — each producing a different variant of ASMR-style content for different platform and content contexts.

Viral and entertainment apps include Transitions, Bullet Time Scene, Bullet Time White, Bullet Time Splash, Rap God, Skibidi, Japanese Show, J-Magazine, J-Poster, Renaissance, Comic Book, Pixel Game, GTAI, Giallo Horror, Ghoulgao, Mugshot, Idol, Cosplay Ahegao, and many others — covering a wide range of trending social media content formats.

Lifestyle and creative apps include Behind the Scenes, Urban Cuts, ClipCut, Style Snap, Paint App, Plushies, Glitter Sticker, Signboard, Simlife, Micro-Beasts, Melting Doodle, Cloud Surf, Mukbang, On Fire, Sketch-to-Real, 3D Render, 3D Figure, 3D Rotation, Burning Sunset, Roller Coaster, Brick Cube, 60s Café, Victory Card, Sand Worm, Storm Creature, Mascot, Social Media Icon, Banana Eating, Yes Kiss, Magic Button, and What's Next.

Photography and production apps include Shots, Zooms, Relight, Poster, Breakdown, Nano Strike, Nano Theft, and Game Dump.

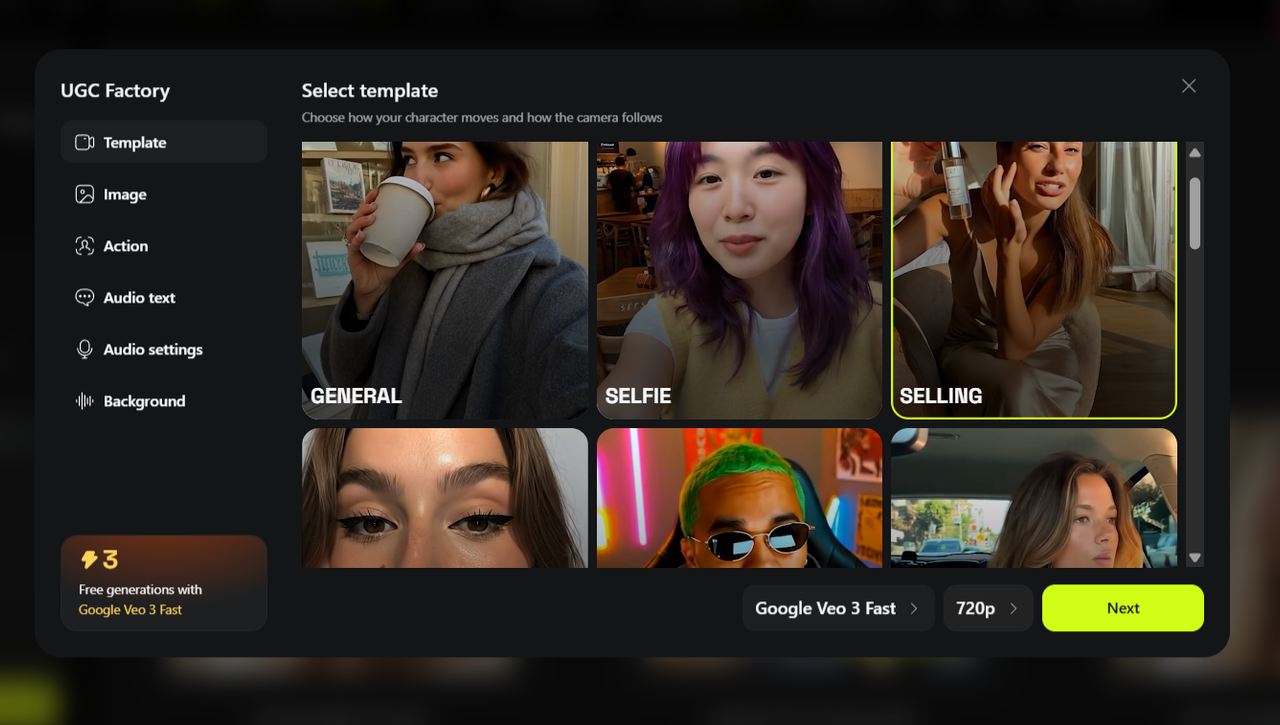

10. Lipsync Studio and Talking Avatars

The Lipsync Studio allows users to generate lip-synced talking video from any still image — including Soul ID characters, uploaded photos of real people (with appropriate consent), or AI-generated portraits. You provide a script as text or audio, select voice characteristics including gender, accent, and emotional tone, and the system generates a video of the character speaking your content with synchronized lip movement and appropriate facial expression.

Kling Avatars 2.0 integration adds a further layer of expressiveness, supporting gesture, full facial expression range, and body language that matches the emotional register of the spoken content — moving beyond simple lip sync into what is effectively full talking avatar performance.

The UGC Factory extends the Lipsync Studio into a full UGC video production pipeline: character selection, script delivery, environment selection, and background audio, all in one workflow — producing complete UGC-style video content suitable for immediate posting or use in ad campaigns.


11. Mixed Media

Mixed Media is a distinct creative mode on Higgsfield that combines real footage or photography with AI-generated stylistic overlays, transforming source material into a wide range of artistic visual formats.

The Mixed Media preset library includes over 30 named styles: Sketch, Canvas, Noir, Paper, Flash Comic, Overexposed, Particles, Hand Paint, Toxic, Tracking, Ultraviolet, Windows, Acid, Palette, Comic, Akrill, Two Color, Multiverse, Fragments, Origami, Random Glow, Vintage, Cannabis, Bubbles, Magazine, Cold Vision, Modern, LSD, Broken Mirror, Lava, Marble, Ocean, and Layer Mixed Media.

Each preset transforms your source image or video into that aesthetic style while retaining the compositional and subject integrity of the original. Mixed Media is particularly popular for social media content that needs to stand out visually without requiring elaborate original generation.

12. Editing Tools

Beyond generation, Higgsfield provides a meaningful set of editing and refinement tools:

Edit Image uses brush-based inpainting — you paint over the area you want to change, describe the replacement in text, and the model fills that area with a new generation that matches the surrounding context. Powered by Soul Inpaint and Nano Banana Pro Inpaint.

Draw to Edit takes this further, letting you sketch directly on an image to indicate compositional changes before the model executes them.

Draw to Video allows rough sketch input to define the motion path or scene layout of a video generation — bridging the gap between hand-drawn storyboarding and AI generation.

Kling Video Edit provides advanced post-generation video editing, including extending clips, modifying specific sections, and applying motion edits to existing video content.

Banana Placement and Product Placement are specialized editing tools for inserting objects — products, logos, props — into existing images or video with natural integration. Product Placement is particularly valuable for e-commerce and commercial production.

Multi-Reference editing allows multiple reference images to be combined in a single edit operation, enabling complex compositional changes that draw from several sources simultaneously.

Sora 2 Upscale is a dedicated upscaling tool specifically optimized for Sora-generated content, addressing the resolution and stability characteristics of that model's output.

13. Upscaling

The Upscale tool, powered by Topaz technology, enhances both images and videos to 4K resolution with detail restoration and quality improvement. It is a standard final step in most serious production workflows on the platform — generate at standard resolution for speed and cost efficiency, then upscale the chosen output for final delivery quality.

A dedicated Video Upscale handles the additional complexity of upscaling video, maintaining temporal consistency (so the upscaling doesn't introduce flickering or artifacts between frames) alongside the spatial quality improvement.


14. Higgsfield Popcorn (Storyboard Generator)

Popcorn is the platform's AI storyboarding tool, found under the Image section at /storyboard-generator. It is designed to solve the fundamental challenge of narrative coherence across a multi-shot AI sequence.

You define a narrative arc — the story beats you want to hit — and Popcorn generates a sequence of consistent storyboard frames that follow that arc. Each frame respects the compositional logic of the previous one: characters maintain position and identity, the environment evolves naturally, and lighting follows a coherent through-line.

Once complete, the storyboard can be baked directly into video, using the approved frames as generation keyframes. This means the AI fills motion between compositions you've already approved, rather than inventing the scene from scratch. For anyone producing short films, ad campaign concepts, or content series, Popcorn dramatically reduces the number of generation iterations required to reach an approved final cut.

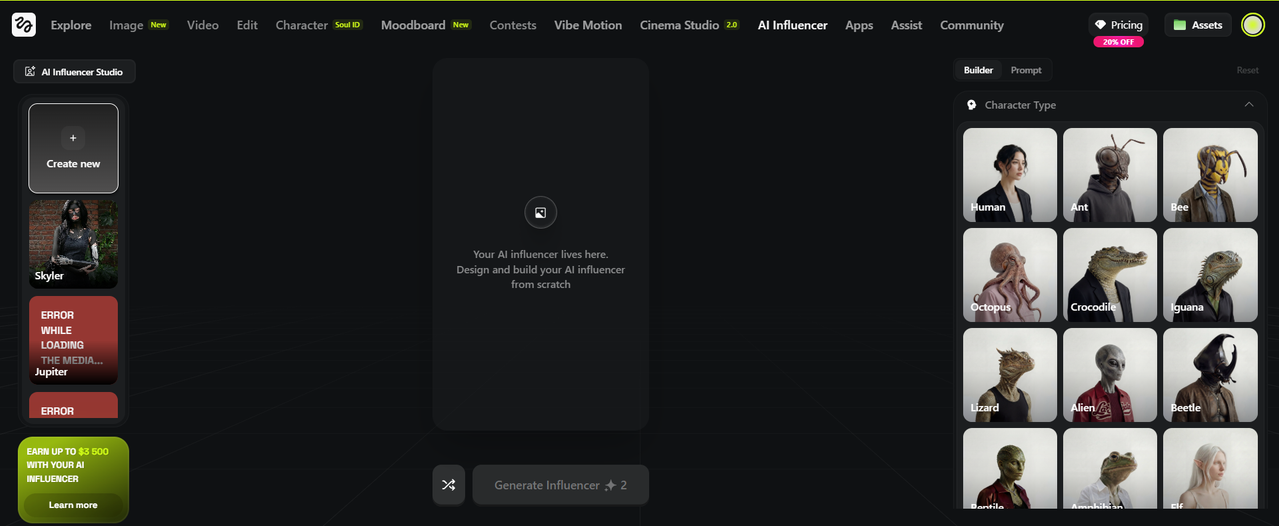

15. AI Influencer Studio

The AI Influencer Studio is Higgsfield's dedicated environment for building, managing, and deploying permanent AI character personas for social media and commercial use. It is powered by Soul ID and provides a structured workflow for everything from initial character design through to deployment-ready content across multiple formats.

Once a character is created and saved, the studio enables placement of that character in any environment, any outfit, and any content format — lifestyle images, talking video, commercial ads, editorial portraits — while maintaining the consistent identity that makes the character recognizable across all of it. Agencies using the studio for client campaigns can manage multiple distinct AI characters within the same account.

The studio integrates directly with Lipsync Studio for speaking content, Click to Ad for commercial production, and the broader Apps library for format-specific content creation.

16. Higgsfield Assist (AI Chat Copilot)

Higgsfield Assist is the platform's built-in AI chat interface, accessible via /chat. It functions as a production copilot — answering questions about platform capabilities, helping refine prompts, providing guidance on model selection for specific creative goals, and assisting with workflow planning.

For new users navigating a complex platform, Assist serves as a practical onboarding resource. For experienced users, it is most useful for rapid prompt iteration and for resolving questions about specific model behavior without leaving the platform.


17. Fashion Factory and Photodump Studio

Fashion Factory is a dedicated image generation environment for fashion-specific content, providing structured inputs for garment description, model characteristics, environment, and styling that streamline the production of fashion imagery compared to general-purpose prompting.

Photodump Studio generates batches of casual, authentic-feeling lifestyle images in a single workflow — producing the kind of multi-image "photo dump" content that performs well on Instagram and TikTok as evidence of an organic, active social presence.

18. Reference Extension (Chrome)

The Higgsfield Reference Extension, available on the Chrome Web Store, allows users to capture visual references directly from any webpage — a photograph, a color palette, a composition, a lighting setup — and add them to their Moodboard or active generation workflow without downloading and re-uploading the image. It is a small but meaningfully practical tool for creators who do visual research in the browser and want to move seamlessly from reference discovery to creative execution.

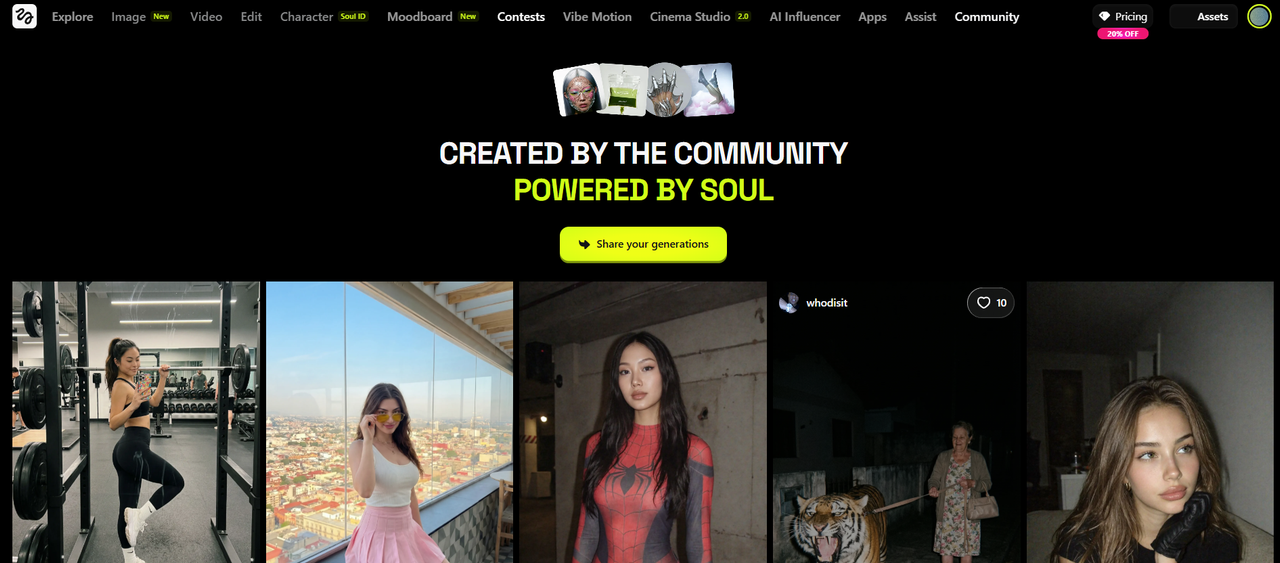

19. Community and Social Features

The Community section of the platform serves as a gallery, discussion hub, and showcase space. Creators share generated content, exchange prompting strategies, discuss model behavior, and provide feedback to the development team.

The platform's Contests section hosts creative competitions with substantial prize pools. At the time of writing, the current contest, "Make Your Action Scene," carries a $500,000 prize pool. Contest submissions are visible in the community and can accumulate significant views independently of the prize competition.

The Ambassador Program offers community members who develop significant platform expertise and audience an opportunity to represent Higgsfield in exchange for credits, early access to new features, and revenue-sharing considerations.

The platform's Discord (discord.gg/higgsfield) is the fastest-moving community channel, where the core team is actively present and major announcements, credit giveaways, and feature launches often appear before they reach the main website.


Higgsfield UGC Builder:

A specialized tool for marketers. It allows you to upload a photo of a person (or yourself) and generate a User-Generated Content (UGC) style video. You can select specific accents, emotions, and background sounds, essentially creating a virtual brand ambassador who "speaks" and acts out your script.

Higgsfield AI Influencer:

Using Soul ID, Higgsfield allows creators to build permanent "AI Influencers." Once a character is saved, you can place them in any location or outfit while keeping the same facial identity from shot to shot, which makes it a favorite for agencies managing virtual talent on Instagram and TikTok.

What is Higgsfield Soul:

Soul is Higgsfield’s proprietary aesthetic model. It is specifically tuned for fashion, beauty, and portraiture. While models like Kling are "realistic," Soul is "aesthetic," focusing on skin textures, fabric draping, and lighting that looks like it belongs in Vogue.

What is Higgsfield Popcorn?

Popcorn is the platform’s AI Storyboarding tool. It allows you to generate a sequence of 8–10 consistent images that follow a narrative arc. Once the storyboard is finished, you can "bake" it directly into a video, ensuring the final animation follows the exact composition of your storyboard.

Higgsfield Contests:

Higgsfield runs one of the most well-funded contest programs in the AI content space, with prize pools that have grown rapidly since launch:

Soul Contest — $10,000

Higgsfield Spotlight — $15,000

Higgsfield Max — $20,000

Global Teams Challenge — $100,000

Make Your Action Scene — $500,000

Each contest runs around a specific creative brief, is open to all users regardless of subscription tier, and submissions are judged on technical quality, creative concept, and storytelling strength. Many top entries from previous contests have already crossed 100,000 views — meaningful exposure entirely separate from the prize money itself.

For creators serious about AI filmmaking, Higgsfield's contests are currently the best combination of financial reward, creative challenge, and public exposure available anywhere in the industry. You can browse all active and past contests at higgsfield.ai/contests.

👉 Click Here To Get 20% Off Higgsfield

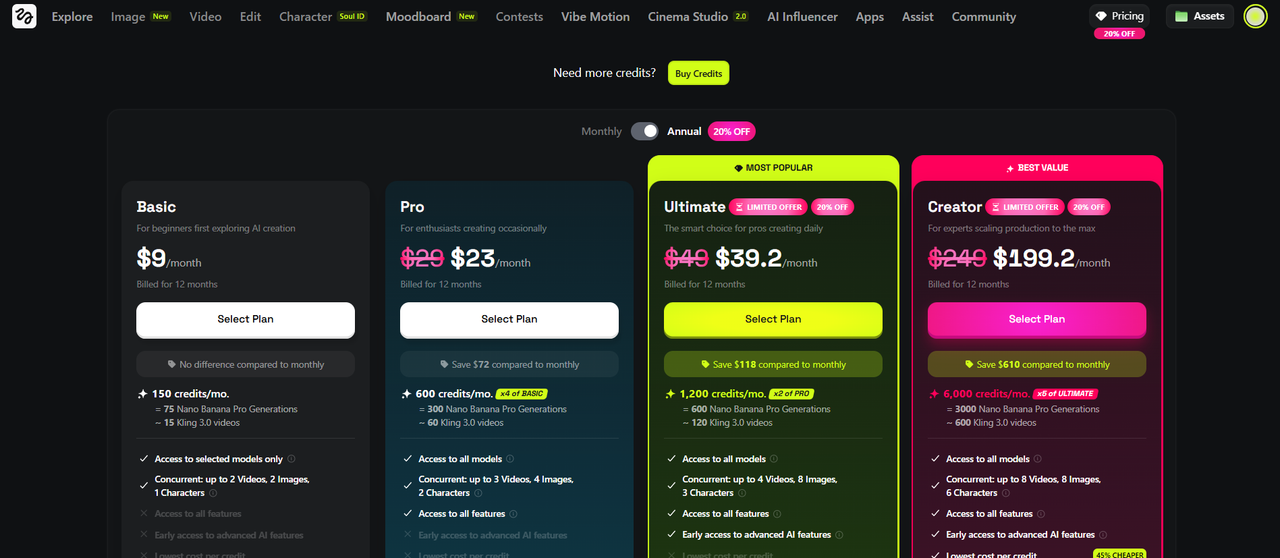

Higgsfield AI Pricing:

Higgsfield uses a credit-based subscription model with multiple tiers. Credit costs vary by model and resolution — faster/lighter models consume fewer credits; premium models (Sora 2, Kling 3.0, Veo 3.1) consume significantly more.

Here is the full breakdown:

Subscription Plans (Billed Annually):

Free — $0/month

The free plan gives you full access to the platform interface — every tool section is visible and navigable — but limits what you can actually run. You receive 150 monthly credits, 2 image jobs, 2 video jobs, and 1 character job per month. Outputs carry a Higgsfield watermark. Premium models including Veo 3.1, Seedance, and 4K generation tiers are locked. No commercial rights. Despite its limits, the free plan is genuinely useful for testing the interface, workflow, and generation quality before committing financially.

Basic — $9/month (billed annually)

An entry-level paid tier suitable for casual users who need more than the free plan allows. It adds watermark removal on paid generations, access to more models, and commercial rights for generated content. The credit volume is still limited, which makes this tier most practical for low-frequency personal use rather than production work.

Pro — $17.40/month (billed annually)

Approximately 600 credits per month. Access to premium models including Sora 2 and Kling, commercial rights, higher resolution outputs, and priority rendering. This is the realistic minimum entry point for creators who intend to use the platform regularly for professional content. Worth noting: one Sora 2 video alone can consume 50+ credits, so 600 credits requires disciplined management.
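To make that budgeting concrete, here is a minimal Python sketch that uses only the figures quoted above: roughly 600 credits per month and 50 credits as the floor for a single Sora 2 video. The function name is illustrative, and because real per-video costs vary by model and resolution, the result is best read as an upper bound.

```python
def max_generations(monthly_credits: int, credits_per_generation: int) -> int:
    """Return how many full generations fit in a monthly credit allowance."""
    if credits_per_generation <= 0:
        raise ValueError("credits_per_generation must be positive")
    # Integer division: a partially funded generation does not count.
    return monthly_credits // credits_per_generation


PRO_CREDITS = 600        # approximate Pro allowance quoted in this review
SORA2_MIN_CREDITS = 50   # "50+" credits per Sora 2 video, so this is a floor

print(max_generations(PRO_CREDITS, SORA2_MIN_CREDITS))  # 12
```

Twelve videos is the ceiling before any retries, upscaling, or discarded takes, which is why the allowance needs careful management.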

Ultimate — $29.40/month (billed annually)

Approximately 1,200 credits per month. Full model access, higher rendering priority, and access to Cinema Studio's advanced features. Independent testing by one review team found that generating 15 quality videos using Cinema Studio with upscaling consumed their entire 1,200 monthly credit allocation — a useful real-world benchmark for planning.
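That benchmark can be turned into a per-video figure. The sketch below assumes the numbers quoted above (1,200 credits, 15 finished videos, $29.40 per month) and simply divides them. The function name is illustrative, and the result describes that one team's workflow rather than a fixed platform rate.

```python
def per_video_stats(plan_price: float, monthly_credits: int, videos: int) -> tuple[float, float]:
    """Return (credits per video, dollars per video) for a usage benchmark."""
    if videos <= 0:
        raise ValueError("videos must be positive")
    return monthly_credits / videos, plan_price / videos


# Figures from the Ultimate plan benchmark described in this review.
credits_each, dollars_each = per_video_stats(29.40, 1200, 15)
print(f"{credits_each:.0f} credits, ${dollars_each:.2f} per video")
# 80 credits, $1.96 per video
```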

Creator — $119/month (billed annually)

Approximately 6,000 credits per month. Designed for agencies, high-volume creators, and production teams. All models, fastest rendering priority, and the broadest feature access. For context, Runway's comparable plan runs $95/month with unlimited Standard generations — making this tier a harder value case unless the specific multi-model access and Cinema Studio capabilities are essential to your workflow.

Enterprise / Custom

Tailored pricing for organizations with specific requirements. Includes API access, pooled team credits, dedicated support, custom workflow integration, and granular admin controls. Bookable via demo at higgsfield.ai/enterprise.

Team Plan

Multi-seat access with pooled credits — the most practical structure for agencies where multiple team members need simultaneous platform access and credit consumption needs to be managed collectively rather than per individual.

Credit Packs

On top of any subscription, users can purchase one-time Credit Packs for additional generation volume. For example, 80 credits for approximately $5. Important to know: Credit Packs expire after 90 days from purchase and do not auto-renew. They are consumed alongside (not instead of) your subscription credits.
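A quick way to compare these options is the effective price per credit. The sketch below assumes the approximate prices and credit counts quoted in this review (annual-billing rates for the plans, and the 80-credit pack at about $5). The dictionary and function names are illustrative.

```python
# (price in USD, credits) as quoted in this review; all figures are approximate.
OFFERS = {
    "Pro": (17.40, 600),
    "Ultimate": (29.40, 1200),
    "Creator": (119.00, 6000),
    "Credit Pack": (5.00, 80),
}


def price_per_credit(price: float, credits: int) -> float:
    """Return the effective cost of one credit in USD."""
    return price / credits


def rank_offers(offers: dict) -> list:
    """Return offer names sorted from cheapest to most expensive per credit."""
    return sorted(offers, key=lambda name: price_per_credit(*offers[name]))


for name in rank_offers(OFFERS):
    print(f"{name}: ${price_per_credit(*OFFERS[name]):.4f} per credit")
```

On these figures a pack credit costs roughly twice as much as a Pro subscription credit, so packs make more sense as an occasional top-up than as a main source of credits.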

Pay-Per-Generation (via Segmind API)

For developers integrating Higgsfield models into their own applications or automated pipelines, the Segmind API offers pay-per-generation pricing rather than a monthly subscription. Rates run from approximately $0.12 per image to $0.86+ per premium video generation depending on the model and output specifications.
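For planning purposes, the quoted rates can be used to estimate a minimum spend for a batch of work. The sketch below is only arithmetic on the two approximate figures above. It does not call the Segmind API, and the constant and function names are illustrative. Because the video rate is quoted as "$0.86+", the result is a floor rather than a quote.

```python
IMAGE_RATE = 0.12  # approximate price per image quoted above (USD)
VIDEO_RATE = 0.86  # approximate starting price per premium video (USD)


def estimate_minimum_cost(images: int, videos: int) -> float:
    """Return a lower-bound cost in USD for a batch of generations."""
    if images < 0 or videos < 0:
        raise ValueError("counts must be non-negative")
    return round(images * IMAGE_RATE + videos * VIDEO_RATE, 2)


print(estimate_minimum_cost(images=100, videos=20))  # 29.2
```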

Pricing Summary Table

| Plan | Monthly Cost (Billed Annually) | Credits/Month | Best For |
| --- | --- | --- | --- |
| Free | $0 | 150 | Testing and exploration |
| Basic | $9 | Limited | Casual personal use |
| Pro | $17.40 | ~600 | Regular professional use |
| Ultimate | $29.40 | ~1,200 | Power users and small teams |
| Creator | $119 | ~6,000 | Agencies and high-volume production |
| Enterprise | Custom | Custom | Large organizations |

Important credit notes:

Monthly subscription credits do not roll over — they expire at the end of the billing cycle

Separately purchased Credit Packs typically have a 90-day shelf life

High-fidelity models like Sora 2, Kling 3.0, and Veo 3.1 can consume credits quickly; budget accordingly for heavy use

The platform also operates on partner networks (e.g., Segmind), where pay-per-generation pricing ranges from approximately $0.12 for images to $0.86+ for premium video
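Since Credit Packs carry an expiry window, it helps to know the exact cutoff date. The sketch below assumes the 90-day shelf life quoted in this review, counted from the purchase date. The function names are illustrative, and the actual terms shown at checkout take precedence.

```python
from datetime import date, timedelta

PACK_LIFETIME_DAYS = 90  # shelf life quoted in this review; confirm at purchase


def pack_expiry(purchased_on: date) -> date:
    """Return the date on which a Credit Pack bought on purchased_on expires."""
    return purchased_on + timedelta(days=PACK_LIFETIME_DAYS)


def days_left(purchased_on: date, today: date) -> int:
    """Return the remaining days before expiry, never below zero."""
    return max((pack_expiry(purchased_on) - today).days, 0)


print(pack_expiry(date(2026, 3, 1)))                  # 2026-05-30
print(days_left(date(2026, 3, 1), date(2026, 5, 1)))  # 29
```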

For teams managing a consistent content pipeline, the Team Plan with pooled credits is likely the most economical approach. For individual power users, tracking model-specific credit costs before committing to a tier is strongly recommended.

👉 Click Here To Get 20% Off Higgsfield

How to Use Higgsfield: A Step-by-Step Workflow

For Image Generation

Select your model: Soul 2.0 for fashion/editorial aesthetics; Nano Banana 2/Pro for speed and 4K resolution; Seedream 4.5/5.0 for logical consistency in complex scenes; GPT Image for conceptual or illustrative work.

Upload a reference (optional but recommended): The closer your reference is to your desired output, the more deterministic the result.

Define your character (optional): If you're generating a specific person, use or create a Soul ID to lock their identity.

Apply a Moodboard (optional): For stylistic consistency across a series.

Write your prompt: Use technical, descriptive language (see Prompting section below).

Generate and refine: Use the Edit Image, Inpaint, or Draw-to-Edit tools to adjust specific areas of the output.

Upscale: Use the Topaz-powered upscaler to reach final output resolution.

For Video Generation

Select your starting point: Text-to-video, image-to-video, or video-to-video transformation.

Choose your model: Kling 2.6/3.0 for cinematic realism with audio; Sora 2 for long-form coherent storytelling; Wan 2.6 for stylized motion; Veo 3.1 for photorealistic physics.

Define camera movement: Use the 3D Directional Sphere or select from motion presets.

Configure lens and lighting (Cinema Studio): Set your lens type, lighting preset, and frame rate.

Inject Soul ID (if applicable): Ensure your character is applied to the generation.

Generate: Start with a shorter clip to verify the composition before committing credits to a longer generation.

Apply VFX (optional): Layer visual effects from the library onto the clip.

Upscale and stabilize: Run through the video upscaler; if using Sora 2 output, apply the Sora 2 Enhancer for stabilization.
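The model choice in the video workflow above is essentially a lookup from creative goal to engine. The sketch below encodes the pairings listed in this review as a plain dictionary. The goal labels are this review's own wording, not identifiers used by the platform, and the function name is illustrative.

```python
# Goal-to-model pairings as described in this review (not platform identifiers).
VIDEO_MODEL_BY_GOAL = {
    "cinematic realism with audio": "Kling 2.6/3.0",
    "long-form coherent storytelling": "Sora 2",
    "stylized motion": "Wan 2.6",
    "photorealistic physics": "Veo 3.1",
}


def suggest_model(goal: str) -> str:
    """Return the suggested video model for a creative goal."""
    key = goal.strip().lower()
    try:
        return VIDEO_MODEL_BY_GOAL[key]
    except KeyError:
        options = ", ".join(sorted(VIDEO_MODEL_BY_GOAL))
        raise ValueError(f"Unknown goal {goal!r}; expected one of: {options}") from None


print(suggest_model("Stylized motion"))  # Wan 2.6
```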

Prompting on Higgsfield: A Technical Guide

Higgsfield's models, particularly Nano Banana Pro and Cinema Studio, are trained on professional cinematography data. This means they respond significantly better to technical cinematic language than to casual descriptive prompts.

Prompt Structure

The platform separates prompts into functional components:

Subject/Character: Who or what is in the scene

Action: What is happening

Camera: Lens, angle, movement

Lighting: Source, quality, mood

Style/Aesthetic: Visual references, post-processing look

Technical specs: Frame rate, resolution feel, grain level
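One way to keep prompts consistent is to store the components above separately and join them only at the end. The sketch below is a hypothetical helper, not a Higgsfield feature: the class name, field names, and comma-joined output order are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ShotPrompt:
    """Holds the six prompt components listed above as separate fields."""
    subject: str
    action: str = ""
    camera: str = ""
    lighting: str = ""
    style: str = ""
    technical: str = ""

    def render(self) -> str:
        """Join the non-empty components into one comma-separated prompt."""
        parts = [self.subject, self.action, self.camera,
                 self.lighting, self.style, self.technical]
        return ", ".join(p.strip() for p in parts if p.strip())


prompt = ShotPrompt(
    subject="woman in silver sequins",
    action="dancing in a nightclub",
    camera="close-up shot, 85mm lens",
    lighting="purple and teal neon bounce light",
    style="cinematic moody",
    technical="24fps",
)
print(prompt.render())
```

Keeping the fields separate makes it easy to change one element, such as the lens or the lighting, between generations while leaving the rest of the shot untouched.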

Prompt Comparison

| Quality | Example |
| --- | --- |
| Weak | "A woman dancing at a club" |
| Better | "A woman in silver sequins dancing in a nightclub, dramatic lighting, slow motion" |
| Professional | "Close-up shot, 85mm lens, anamorphic flare, woman in silver sequins, volumetric fog, purple and teal neon bounce light, 24fps, high-motion blur, consistent character [SoulID_Alpha], cinematic moody preset" |

Key Technical Terms That Improve Results

Lens specification: "85mm portrait," "24mm wide," "anamorphic scope"

Light quality: "soft diffused," "hard side light," "rim light," "practical neon fill"

Depth of field: "shallow bokeh," "deep focus," "rack focus mid-shot"

Movement and framing: "handheld verité," "locked-off static," "slow push in," "Dutch angle"

Film aesthetics: "16mm grain," "Super 8 gate weave," "RED cinema flat," "Kodak 5219 emulation"

Tempo: "24fps cinematic," "48fps hyper-real," "overcranked slow motion"

The platform's Prompt Guide (available at /nano-banana-pro-prompt-guide) provides extensive model-specific guidance and is worth reading before beginning serious creative work.

👉 Click Here To Get 20% Off Higgsfield

Higgsfield Pros & Cons:

Pros:

Character Consistency is the platform's crown jewel. No other consumer-facing tool matches Soul ID's ability to maintain a character's identity across multiple shots, models, and creative scenarios. For anyone building an AI film, a content series, a virtual influencer, or a brand character, this is transformative.

Model Choice and Flexibility means you're never locked into a single engine. When Sora 2 is better for your use case, use Sora 2. When Kling's cinematic quality is what you need, use Kling. The platform aggregates them all under one credit system and one interface.

Professional-Grade Camera Control puts Higgsfield in a class of its own for filmmakers. The 3D Directional Sphere, lens simulation, and Cinema Studio presets replicate the mental model of a real cinematographer in a way that no other AI tool currently matches.

Physics and Logical Consistency via the Seedream engine means fewer of the "liquid face" artifacts, warping body parts, and physically impossible motions that define lower-tier generators.

Cultural and Aesthetic Intelligence in Soul 2.0 means the platform produces imagery that feels contemporary and platform-native — not the generic, slightly-off-brand look that signals "AI-made" to experienced content consumers.

Cons:

Learning Curve: Cinema Studio 2.0 is genuinely complex. New users who arrive expecting a simple text box will be overwhelmed. The platform rewards investment in learning but does not ease you in gently.

Credit Economics: Premium models — particularly Sora 2, Kling 3.0, and Veo 3.1 — consume credits at a rate that can feel punishing for iterative creative work where you're generating and discarding multiple attempts to get one keeper shot.

Subscription Complexity: The combination of plan tiers, per-model credit costs, separate Credit Pack expiration rules, and partner-platform pricing creates a system that requires careful management to avoid unexpected shortfalls mid-project.

Mobile Limitations: The mobile app is well-designed, but significant Cinema Studio features remain desktop-only. Creators who work primarily on mobile will find the experience more limited.

👉 Click Here To Get 20% Off Higgsfield

Who Should Use Higgsfield?

The platform is unambiguously worth it for:

Professional filmmakers and video directors exploring AI augmentation

Content agencies producing high-volume social media material

Brands building AI influencer or virtual spokesperson campaigns

Individual creators with a defined visual identity they need to maintain across content

Marketers who need to produce video advertisements quickly and at scale

It may be overkill for:

Casual users who want to occasionally make "something cool" with AI

Writers or researchers who just want quick visual illustrations

Teams with very tight budgets who can't absorb the credit cost

Does Higgsfield AI have an app?

Yes. Higgsfield is one of the few platforms that is mobile-first. The "Diffuse" app is available on iOS (and Android in select regions), offering a streamlined version of the web tools for creators on the go.

Does Higgsfield have an API?

Yes. Higgsfield offers a robust Python SDK and API for developers. It allows businesses to integrate Soul ID, video generation, and speech-to-video capabilities into their own applications or automated workflows (e.g., via Make.com).

Do Higgsfield credits roll over?

Generally, no. Monthly subscription credits usually expire at the end of the billing cycle. However, "Credit Packs" (extra credits purchased on top of a plan) often have a longer shelf life, typically around 90 days.

👉 Click Here To Get 20% Off Higgsfield

Higgsfield Customer Service:

Support is handled primarily through two channels:

Email: support@higgsfield.ai for billing and technical issues.

Discord: Their community server is the fastest way to get help from the core team and fellow creators.

Higgsfield AI Social Media Handles:

Higgsfield maintains an active presence across all major social platforms. Here are the official handles:

X / Twitter: @higgsfield_ai

Instagram: @higgsfield.ai

YouTube: @HiggsfieldAI

TikTok: @higgsfield_ai

LinkedIn: Higgsfield

Discord: discord.gg/higgsfield

Each channel serves a distinct purpose. Instagram and TikTok showcase the platform's best community-generated content and trending creative formats. YouTube carries tutorials, model launch announcements, and feature walkthroughs. X/Twitter and LinkedIn handle news, product updates, and industry commentary. Discord is the most operationally important channel — it is where the core team is most active, where credit giveaways and early feature access are announced first, and where creators get the fastest answers to technical and workflow questions.

If you follow Higgsfield on only one platform, make it Discord.

Is Higgsfield AI free?

It offers a freemium model. You can start for free with a monthly allotment of credits (150 on the Free plan described in the pricing section), but these are limited to lower-tier models (like Nano Banana) and outputs include a watermark. Commercial use and the "Cinema Studio" require a paid plan.

Is Higgsfield worth it?

Yes, if you are a creator who needs control. If you just want to see "cool AI videos," cheaper tools exist. But if you are a professional who needs a character to look the same in five different shots, Higgsfield’s consistency tools make it worth every penny.

Is Higgsfield censored?

Higgsfield maintains platform-level safety filters to prevent illegal or harmful content. However, they recently integrated Z-Image (an open-source model), which is significantly less restrictive, allowing for more "artistic freedom" and niche aesthetics that strict commercial filters often block.

Is Higgsfield good?

Yes. It is one of the strongest AI video platforms currently available. Its ability to act as a "hub" for other models while providing its own specialized "Soul" and "Popcorn" tools makes it a versatile powerhouse.

Is Higgsfield AI Legit?

Yes, Higgsfield AI is completely legitimate. Here's the proof across the key trust indicators:

Company: Registered business headquartered at 535 Mission Street, 14th Floor, San Francisco, CA 94105. Founded by Alex Mashrabov, former Director of Generative AI at Snap Inc. — a verifiable, publicly documented professional with a real career history.

Product: Fully functional and actively developed. Its integrations with OpenAI (Sora 2), Google (Veo 3.1), and Kling require legitimate commercial partnerships — not something a fraudulent platform could obtain.

Community: Large, active, and verifiable user base across Discord, Instagram, TikTok, YouTube, and X/Twitter with genuine creator submissions, real engagement, and a current contest drawing hundreds of thousands of views.

Reviews: 3.2 out of 5 on Trustpilot across 1,200+ verified reviews. The negative feedback centers on billing transparency and customer service speed — the frustrations of a real product, not the red flags of a scam.

The bottom line: Higgsfield is a real company with real technology and real users. You pay for a plan, you get what is advertised. Its flaws are the growing pains of an ambitious platform, not evidence of fraud.

👉 Click Here To Get 20% Off Higgsfield


Conclusion: Higgsfield AI Review

Higgsfield AI is the most complete AI video and image production platform available. It combines proprietary models (Soul 2.0, Nano Banana 2), third-party engines (Sora 2, Kling 3.0, Google Veo 3.1), and professional cinematography tools (Cinema Studio 2.0, Motion Control, Soul ID) into a single ecosystem built for creators who need control, consistency, and quality at the same time.

Higgsfield AI is worth it for creators who need:

A single platform that accesses multiple AI models without switching tools

Character consistency across multiple shots via Soul ID

Professional camera control through Cinema Studio 2.0 and the 3D Directional Sphere

One-click commercial content through 85+ Apps including Click to Ad, Face Swap, and Recast

A free starting point with a genuine upgrade path as production needs grow

Higgsfield AI is not the right tool for:

Casual users who want occasional AI video without a learning curve

Creators on tight budgets who generate high volumes of premium model content

Post-production professionals whose primary need is editing rather than generation

The bottom line: Higgsfield AI earns a strong 8.5 out of 10. Its Soul ID character system is unmatched in the market, its multi-model flexibility means you are never locked into a single engine, and Cinema Studio 2.0 gives filmmakers AI tools that actually speak their language. The platform's main limitations — a steep learning curve, credit economics that require management, and customer support that doesn't yet match the professional positioning — are real but surmountable. No other single platform combines this breadth of capability with this level of creative control. For professional creators, marketing agencies, and serious AI filmmakers, Higgsfield is the current gold standard.

👉 Click Here To Get 20% Off Higgsfield

- Published on Mar 01, 2026
